RORB 6.52 Update

The verification example below was also repeated in RORB 6.52, using the RORB_CMD executable distributed with that version, and the RORB and Storm Injector results were found to match almost exactly.

RORB 6.45 Update

The example below was completed in an earlier version of RORB. RORB 6.45 now supports application of ARR Data Hub pre-burst information using GSAM/Jordan temporal patterns. This feature has also been verified against Storm Injector's pre-burst temporal pattern modelling and found to produce very similar results for the project below. TP 2304 is still adopted for the 12 hour critical duration, with a peak flow of ~223 m3/s in Storm Injector versus ~221 m3/s in RORB. The small difference is likely due to the pre-burst depth applied: RORB applies 8.9 mm, whereas Storm Injector adopts 11.6 mm, which is the median pre-burst depth in the RORB_DataHub.txt file.
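To put the size of that difference in context, the short sketch below computes the relative difference between the two quoted peak flows and the gap between the two pre-burst depths. The values are the approximate figures quoted above; the function and variable names are illustrative only.

```python
# Approximate peak flows (m3/s) and pre-burst depths (mm) quoted in the text.
storm_injector_peak = 223.0
rorb_peak = 221.0
storm_injector_preburst = 11.6
rorb_preburst = 8.9


def relative_difference(a, b):
    """Return the difference between a and b as a percentage of b."""
    return 100.0 * (a - b) / b


peak_diff_pct = relative_difference(storm_injector_peak, rorb_peak)
preburst_diff_mm = storm_injector_preburst - rorb_preburst

print(f"Peak flow difference: {peak_diff_pct:.1f} %")
print(f"Pre-burst depth difference: {preburst_diff_mm:.1f} mm")
```

On these rounded figures the peak flows differ by a little under 1%, while the pre-burst depths differ by 2.7 mm.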

Input Data

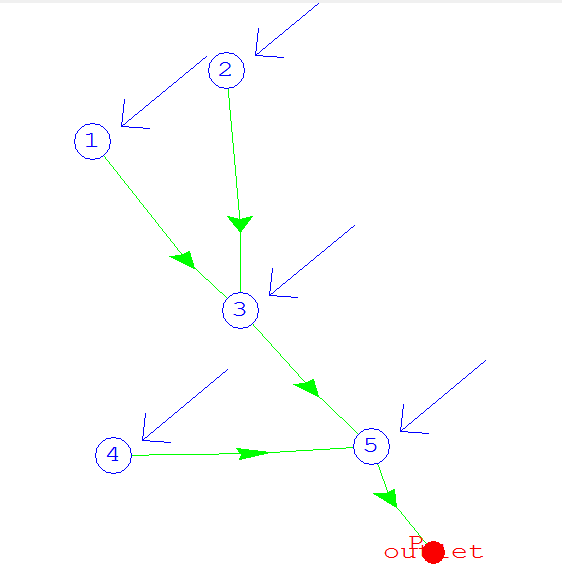

A simple test catchment was created to verify RORB. It consisted of five nodes with a total area of 70 km2. All subcatchments were 20% impervious. Hub Data, IFD Data and Temporal Patterns were downloaded for the location at longitude 150, latitude -30. The data files included:

• RORB_DataHub.txt - A download from the Data Hub website

• CS_Increments.csv - Temporal Patterns downloaded from the Data Hub website

• RORB_IFD.csv - IFD data downloaded from the BoM website

The test catchment was created in the RORB GE tool, saved as RORB_Test.catg, and is shown below.

Completing the Project in RORB

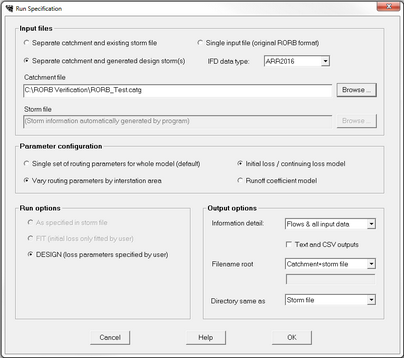

The RORB ARR 2016 configuration tool was used to set up the runs as shown below.

The design rainfall specification was set up with the RORB_DataHub.txt, CS_Increments.csv and RORB_IFD.csv files. Durations from 10 min to 168 hr were selected.

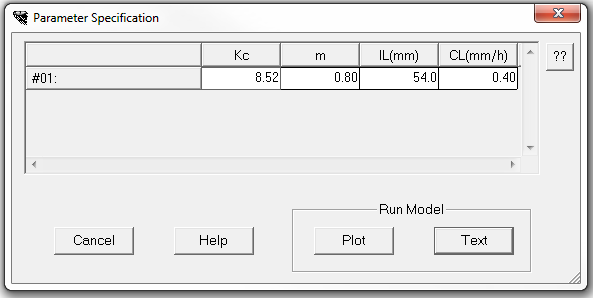

At the time of this exercise, RORB did not have the capability to make pre-burst adjustments to the initial loss, so an initial loss of 54 mm and a continuing loss of 0.4 mm/hr were applied as per the downloaded Hub Data. A Kc of 8.52 and an m of 0.8 were applied.
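As an illustration of what the initial and continuing loss values do, the following is a minimal sketch of an initial loss / continuing loss model applied to a pervious-area hyetograph. It is a simplified stand-in and not RORB's or Storm Injector's actual implementation: the function name, the hourly hyetograph and the handling of the time step in which the initial loss is exhausted are all assumptions made for the example.

```python
def rainfall_excess(rain_mm, dt_hr, il_mm, cl_mm_per_hr):
    """Apply an initial loss / continuing loss model to a hyetograph.

    rain_mm: rainfall depth in each time step (mm)
    dt_hr: time step length (hours)
    Returns the rainfall excess in each time step (mm).
    """
    remaining_il = il_mm
    excess = []
    for depth in rain_mm:
        # The initial loss absorbs rainfall until it is exhausted.
        absorbed = min(depth, remaining_il)
        remaining_il -= absorbed
        after_il = depth - absorbed
        # Assumption: the full continuing loss for the step is applied to
        # whatever rainfall is left, even in the step where IL runs out.
        loss = min(after_il, cl_mm_per_hr * dt_hr)
        excess.append(after_il - loss)
    return excess


# Hypothetical hourly hyetograph (mm); not taken from the verification data.
example_rain = [10.0, 30.0, 40.0, 20.0, 5.0]
example_excess = rainfall_excess(example_rain, dt_hr=1.0, il_mm=54.0, cl_mm_per_hr=0.4)
print([round(x, 1) for x in example_excess])
```

With these inputs the first two hours are absorbed entirely by the 54 mm initial loss, and only the continuing loss is deducted once it is exhausted.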

At this point RORB can be run and a Text or Plot output generated.

Completing the Project in Storm Injector

To replicate these results in Storm Injector, it is necessary to create a command line version of the RORB *.par file. This was simply done by referencing the RORB_Test.catg file and manually entering the Kc, m and pervious loss rates. The contents of the RORB_Test_Cmd.par file are:

# BEGIN

Cat file :RORB_Test2.catg

Stm file :

Lumped kc:T

Verbosity:3

Lossmodel:1

Num ISA :1

ISA 1 :8.52,0.8

Num burst:1

ISA 1 :54,0.40

# END
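If several parameter sets need to be tested, a file like the one above could be generated rather than typed by hand. The sketch below simply reproduces the key/value lines of the listing for a single ISA and a single burst. The helper name `build_rorb_par` is hypothetical, and the exact spacing and column layout expected by RORB_CMD is an assumption taken from the listing as shown here, so the output should be checked against a known working file.

```python
def build_rorb_par(cat_file, kc, m, il_mm, cl_mm_per_hr, stm_file=""):
    """Return the text of a single-ISA, single-burst command line par file.

    The line labels are copied from the listing above; they are not taken
    from the RORB manual.
    """
    lines = [
        "# BEGIN",
        f"Cat file :{cat_file}",
        f"Stm file :{stm_file}",
        "Lumped kc:T",
        "Verbosity:3",
        "Lossmodel:1",
        "Num ISA :1",
        f"ISA 1 :{kc:g},{m:g}",                # routing parameters kc, m
        "Num burst:1",
        f"ISA 1 :{il_mm:g},{cl_mm_per_hr:g}",  # initial loss, continuing loss
        "# END",
    ]
    return "\n".join(lines) + "\n"


par_text = build_rorb_par("RORB_Test2.catg", kc=8.52, m=0.8, il_mm=54, cl_mm_per_hr=0.4)
print(par_text)
```

The returned string can then be written to a file such as RORB_Test_Cmd.par. Note that the `:g` format writes the continuing loss as `0.4` rather than `0.40`, which is numerically identical.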

Once this file is created, the project can be modelled in Storm Injector with the following steps:

1. Import the *.catg file using '1) Import Model >> RORB (*.catg)' and select the RORB_Test.catg file. Click No when prompted to convert coordinates.

2. Import the Hub Data using '2) Get Hub Data >> load text file' and select RORB_DataHub.txt.

3. Since the Hub Data file did not include the temporal patterns, import these by right-clicking the Point (2016) Temporal Patterns grid, selecting Import TPs and choosing CS_Increments.csv.

4. Import the IFD Data using '3) Get IFD Data >> Import ARR 2016 IFD from csv(s)' and select RORB_IFD.csv.

5. To match the RORB results, adjust the following settings on the Settings tab:

a. Since RORB cannot do pre-burst adjustment of initial loss, select Global Initial Loss in the Initial Loss section of the Initial and Continuing Loss panel.

b. Set the Impervious IL and CL values to 0 and 0.

c. Set 'First exceeding mean' for selection of the adopted temporal pattern.

6. Select the 1% AEP rare event in the Selected Events panel.

7. Click '4) Create Storms'.

8. Click '5) Prepare Model Runs' and select the RORB_Test_Cmd.par file.

9. Click '6) Run Models'.

10. Click '7) Process Results'.

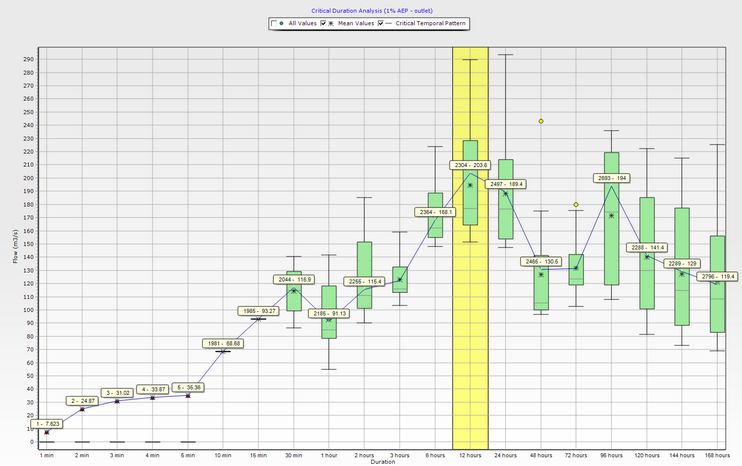

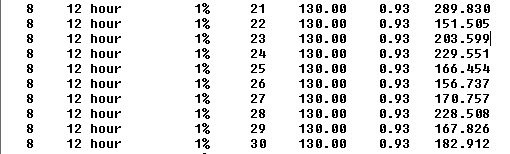

Using this procedure, Storm Injector generates near-identical results to RORB. Storm Injector identifies the 12 hour storm as critical and TP 2304 as the critical temporal pattern, with a peak flow of 203.6 m3/s. This matches the result for TP 23 in the RORB output, which is the first TP above the mean for this duration. This verifies that RORB and Storm Injector are calculating the same ARF and applying the temporal patterns in an identical fashion.
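The selection logic described above can be sketched as follows. This is an illustration only, assuming that 'first exceeding mean' means the smallest ensemble peak that is strictly greater than the ensemble mean, and that the critical duration is the one whose representative peak is largest. The function names and the peak flows in the example are hypothetical and are not results from this verification.

```python
from statistics import mean


def first_exceeding_mean(peaks):
    """peaks: dict mapping temporal pattern ID -> peak flow (m3/s).

    Return (tp_id, peak) for the smallest peak strictly above the mean.
    """
    avg = mean(peaks.values())
    above = [(q, tp) for tp, q in peaks.items() if q > avg]
    # If every run gives the same peak, nothing exceeds the mean, so fall
    # back to the largest peak.
    q, tp = min(above) if above else max((q, tp) for tp, q in peaks.items())
    return tp, q


def critical_duration(results):
    """results: dict mapping duration -> {tp_id: peak flow}.

    Return (duration, tp_id, peak) with the highest representative peak.
    """
    best = None
    for duration, peaks in results.items():
        tp, q = first_exceeding_mean(peaks)
        if best is None or q > best[2]:
            best = (duration, tp, q)
    return best


# Hypothetical ensemble peaks (m3/s) for two durations.
example_results = {
    "6 hr": {"TP1": 150.0, "TP2": 170.0, "TP3": 175.0, "TP4": 195.0},
    "12 hr": {"TP1": 180.0, "TP2": 205.0, "TP3": 210.0, "TP4": 215.0},
}
print(critical_duration(example_results))
```

For the 12 hr ensemble in this example the mean is 202.5 m3/s, so TP2 (205 m3/s) is the first pattern above it, and 12 hr is reported as the critical duration.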

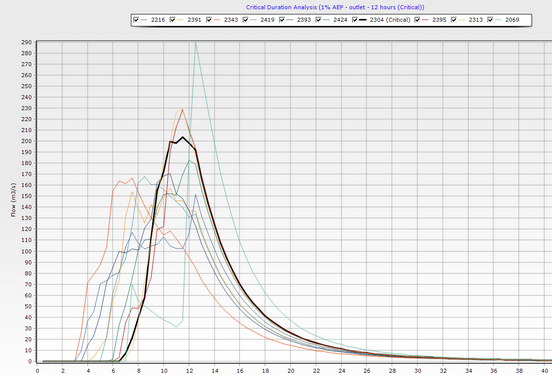

RORB Tabular Results for 12 hour duration storm

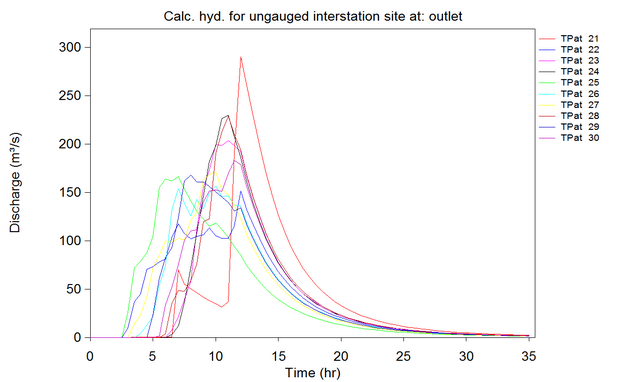

The hydrographs can also be seen to be near identical.

Storm Injector and RORB hydrographs for 12 hour duration storm

Comments

RORB does not analyse the results of the individual storm runs to determine the representative temporal patterns and peak flows for each duration, nor does it determine the critical duration. At the time of this verification exercise, RORB did not provide for adjusting the initial loss values in accordance with the median pre-burst data as suggested in the ARR 2016 guidelines (note that RORB 6.45 now allows pre-burst modelling using in-built pre-burst temporal patterns). Finally, RORB does not allow more than one IFD dataset to be used to represent different subcatchments in the project. As such, a fully spatially varying verification could not be undertaken.
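For reference, the initial loss adjustment mentioned above is commonly expressed as burst initial loss = storm initial loss minus pre-burst depth, floored at zero. The sketch below applies that relationship to the 54 mm storm initial loss and the 11.6 mm median pre-burst depth quoted earlier. It is an illustration only: the function name is hypothetical, and this adjustment was deliberately not used in the verification runs described on this page.

```python
def burst_initial_loss(storm_il_mm, preburst_mm):
    """Burst initial loss (mm): storm initial loss less pre-burst depth.

    The result is floored at zero so that a pre-burst depth larger than
    the storm initial loss does not produce a negative loss.
    """
    return max(storm_il_mm - preburst_mm, 0.0)


example_burst_il = burst_initial_loss(54.0, 11.6)
print(f"Burst initial loss: {example_burst_il:.1f} mm")
```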